Details

Task

Resolution: Fixed

Major

4.1.1

None

Ubuntu, amd64, 3 nodes 4CPU x 4GB RAM

Description

A very simple scenario (synchronous, sequential, one-by-one document upserts) causes ~90% GSI fragmentation, with no way to defragment the index afterwards (a server restart does not help).
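A fragmentation figure such as the ~90% above is commonly read as the share of the index file on disk that no longer holds live data. The sketch below assumes that definition (it is not stated in this report, and the exact formula the indexer uses may differ). The class name and the file sizes in `main` are hypothetical, chosen only to reproduce a value of 87.9%.

```java
public class FragmentationMath {

    // Assumed definition: percentage of the on-disk file that is not live data.
    // Both sizes must use the same unit (bytes, MB, ...).
    public static double fragmentationPercent(long diskSize, long liveDataSize) {
        if (diskSize <= 0) {
            return 0.0; // empty file: nothing to fragment
        }
        return (diskSize - liveDataSize) * 100.0 / diskSize;
    }

    public static void main(String[] args) {
        // Hypothetical sizes in MB: a 1000 MB index file holding 121 MB of live entries
        long diskMb = 1000;
        long liveMb = 121;
        System.out.println("fragmentation = " + fragmentationPercent(diskMb, liveMb) + "%");
    }
}
```

With these numbers the program prints `fragmentation = 87.9%`: roughly seven eighths of the file would be stale data that only compaction can reclaim.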

How to reproduce (or "how to degrade an index to 87.9% fragmentation in 6 minutes with 20K items"):

1. Couchbase Server 4.1.1-EE GA (build 5914), 3 nodes (4 CPU, 4 GB RAM each)

2. All nodes have all services enabled

3. Set up a cluster with the `default` bucket (2400 MB RAM quota, full ejection, view index replicas enabled, I/O priority = high, flush enabled)

4. Run this code:

```java
package highcpuafterload;

import com.couchbase.client.java.Bucket;
import com.couchbase.client.java.Cluster;
import com.couchbase.client.java.CouchbaseCluster;
import com.couchbase.client.java.document.JsonDocument;
import com.couchbase.client.java.document.json.JsonObject;
import com.couchbase.client.java.env.CouchbaseEnvironment;
import com.couchbase.client.java.env.DefaultCouchbaseEnvironment;
import com.couchbase.client.java.query.N1qlQuery;

import java.util.LinkedList;
import java.util.Scanner;
import java.util.concurrent.ThreadLocalRandom;

public class BombardaMaxima {

    private static final CouchbaseEnvironment ce;
    private static final Cluster cluster;
    private static final String bucket = "default";
    private static final Scanner stdin = new Scanner(System.in);

    // configure here
    private static final int indexableInsertions = 1;    // docs with fields a, b, c per run
    private static final int nonIndexableInsertions = 1; // docs with fields x, y, z per run
    private static final int totalRuns = 10000;          // 10000 runs x 2 docs = 20K items

    static {
        ce = DefaultCouchbaseEnvironment.create();

        // Bootstrap list for the 3-node cluster; replace with the real host names
        final LinkedList<String> nodes = new LinkedList<>();
        nodes.add("A.node");
        nodes.add("B.node");
        nodes.add("C.node");
        cluster = CouchbaseCluster.create(ce, nodes);

        final Bucket b = cluster.openBucket(bucket);

        // Two partial GSI indexes that only cover documents where `a` is present and non-null.
        // On a rerun the indexes already exist; the query results are not checked here.
        final String iQA = "CREATE INDEX iQA ON `default`(a, b) WHERE a IS VALUED USING GSI";
        final String iQX = "CREATE INDEX iQX ON `default`(a, c) WHERE a IS VALUED USING GSI";
        b.query(N1qlQuery.simple(iQA));
        b.query(N1qlQuery.simple(iQX));
    }

    // Builds a document with a random key and three random integer fields named a, b, c
    public static JsonDocument makeDoc(String a, String b, String c) {
        return JsonDocument.create(
                String.valueOf(ThreadLocalRandom.current().nextInt()),
                JsonObject.empty()
                        .put(a, ThreadLocalRandom.current().nextInt())
                        .put(b, ThreadLocalRandom.current().nextInt())
                        .put(c, ThreadLocalRandom.current().nextInt()));
    }

    // One run: synchronously upsert the non-indexable docs one by one, optionally wait
    // for the user to check the fragmentation rate, then upsert the indexable docs
    public static void makeFragmentation(Bucket b, int n, boolean pause) {
        System.out.println("[" + n + "] inserting " + nonIndexableInsertions + " non-indexable documents");
        for (int i = 0; i < nonIndexableInsertions; i++) b.upsert(makeDoc("x", "y", "z"));

        if (pause) {
            System.out.println("press 'Enter' after checking fragmentation rate ...");
            stdin.nextLine();
        }

        System.out.println("[" + n + "] inserting " + indexableInsertions + " indexable documents");
        for (int i = 0; i < indexableInsertions; i++) b.upsert(makeDoc("a", "b", "c"));
        System.out.println("... check fragmentation rate");
    }

    public static void main(String[] args) {
        Bucket b = cluster.openBucket(bucket);
        // pause = false: run all iterations back to back without waiting for input
        for (int i = 0; i < totalRuns; i++) makeFragmentation(b, i, false);
        cluster.disconnect();
    }
}
```

5. Now check the fragmentation rate, restart the server, and run the code again. All you get is further index fragmentation.
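In the reproduction code, only the documents built with fields `a`, `b`, `c` satisfy the `WHERE a IS VALUED` clause of `iQA` and `iQX`; the `x`, `y`, `z` documents never enter either index. The stdlib-only sketch below models that predicate and counts how many of the 20K writes reach the indexes with the default settings. The class and method names are hypothetical, and plain `Map`s stand in for the SDK's `JsonObject`; it does not talk to a cluster.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ThreadLocalRandom;

public class PartialIndexSketch {

    // Same document shape as makeDoc(): three random integer fields with the given names
    static Map<String, Object> makeDoc(String a, String b, String c) {
        Map<String, Object> doc = new HashMap<>();
        doc.put(a, ThreadLocalRandom.current().nextInt());
        doc.put(b, ThreadLocalRandom.current().nextInt());
        doc.put(c, ThreadLocalRandom.current().nextInt());
        return doc;
    }

    // Models N1QL `field IS VALUED`: the field is present (not MISSING) and not NULL
    public static boolean isValued(Map<String, Object> doc, String field) {
        return doc.containsKey(field) && doc.get(field) != null;
    }

    // Returns {writes matching `a IS VALUED`, writes skipped by both partial indexes}
    public static int[] countIndexed(int totalRuns, int indexable, int nonIndexable) {
        int indexed = 0;
        int skipped = 0;
        for (int run = 0; run < totalRuns; run++) {
            for (int i = 0; i < nonIndexable; i++) {
                if (isValued(makeDoc("x", "y", "z"), "a")) indexed++; else skipped++;
            }
            for (int i = 0; i < indexable; i++) {
                if (isValued(makeDoc("a", "b", "c"), "a")) indexed++; else skipped++;
            }
        }
        return new int[] {indexed, skipped};
    }

    public static void main(String[] args) {
        int[] counts = countIndexed(10000, 1, 1);
        // Every matching write produces an entry in both iQA and iQX
        System.out.println("entries per index: " + counts[0] + ", writes skipped: " + counts[1]);
    }
}
```

This prints `entries per index: 10000, writes skipped: 10000`, so each index sees only half of the upserts, interleaved one by one with writes it ignores.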

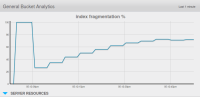

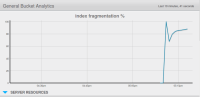

Below are my screenshots of "first 45 seconds", and "hourly" fragmentation stats.