Details

- Bug
- Resolution: Won't Fix
- Major
- None
- 1.6.0
- None

Description

Related to CBG-317

gocb version: d46732ea85f0ca44f82842ca2996bd2a21995172

gocbcore version: 5905fd813f0ec718a59374725efaab4993560436

Background

We use gocb to connect to Couchbase Server over the "couchbases://" scheme (with the built-in self-signed cert on the server side) and standard RBAC user/password bucket authentication. When running Sync Gateway in a QE environment, we see a large number of "network error"s returned for various bucket ops. QE can reliably reproduce this in one of their tests.

The gocb trace logging only gives us a little insight, but we don't understand why this is happening, and why it only seems to happen when connecting to Couchbase Server using TLS. If you change the scheme to "couchbase://", the problem goes away.

Unfortunately, because the connection to Couchbase Server is encrypted, we can't see what is in the packets between gocb and Couchbase Server, and I don't have the keys for the self-signed cert to decrypt them. That makes it difficult to tell what's going on.

Looking at a specific example in attached files

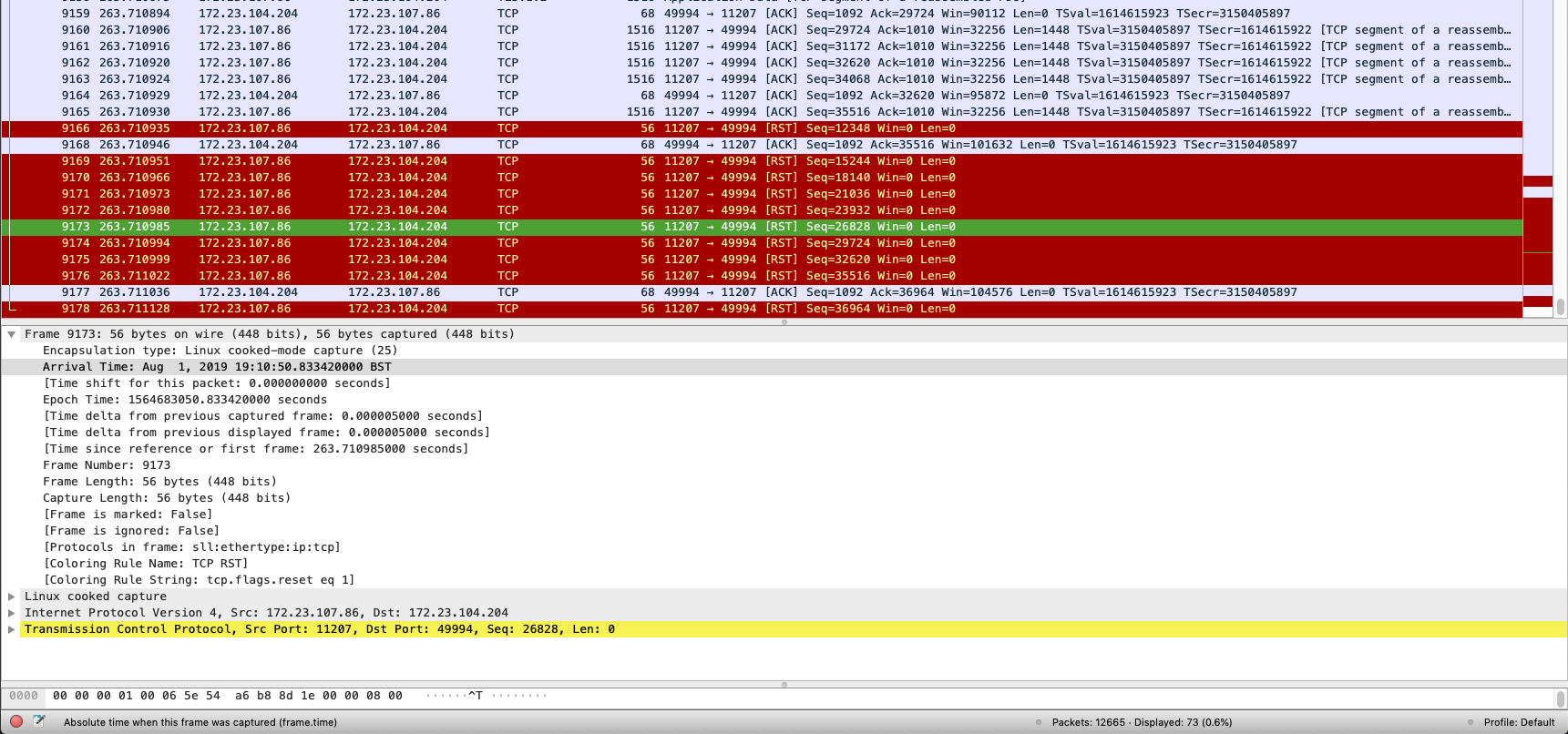

Looking closer at the pcap file in Wireshark with the filter "tcp.stream eq 43", and comparing it against the "sg_trace.log" entries around timestamp "2019-08-01T11:10:50.833-07:00", we can see a correlation between a burst of TCP RST packets sent from the server to the client and the "network error"s that gocb returns to Sync Gateway.

From sg_trace.log

2019-08-01T11:10:50.833-07:00 [ERR] gocb: memdClient read failure: read tcp 172.23.104.204:49994->172.23.107.86:11207: read: connection reset by peer -- base.GoCBCoreLogger.Log() at logger_external.go:47

2019-08-01T11:10:50.833-07:00 [ERR] gocb: Failed to shut down client connection (write tcp 172.23.104.204:49994->172.23.107.86:11207: write: broken pipe) -- base.GoCBCoreLogger.Log() at logger_external.go:47

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` client died

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` closing consumer 0xc000dac8f0

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` fetching new consumer

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` waiting for client shutdown

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` received client shutdown notification

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline Client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` preparing for new client loop

2019-08-01T11:10:50.833-07:00 [TRC] Bucket: GetWithXattr("sg_3", ...) [4.458546ms]

2019-08-01T11:10:50.833-07:00 [TRC] gocb: Pipeline Client `s61401cnt72.sc.couchbase.com:11207/0xc000328200` retrieving new client connection for parent 0xc0002ee6e0

2019-08-01T11:10:50.833-07:00 [TRC] Bucket: Incr("_sync:seq", 1, 1, 0) [1.180804ms]

2019-08-01T11:10:50.833-07:00 [WRN] Error from Incr in sequence allocator (1) - attempt (1/3): network error -- db.(*sequenceAllocator).incrWithRetry() at sequence_allocator.go:112

Wireshark showing same timeframe
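To make the log-to-capture correlation repeatable for other failures, the connection endpoints in a gocb error line can be turned into a Wireshark display filter that isolates server-to-client RSTs on that connection. This is a minimal sketch, assuming the error text always carries the `tcp <local>-><remote>` fragment with IPv4 addresses seen in the entries above; `rstFilter` is a hypothetical helper name.

```go
package main

import (
	"fmt"
	"regexp"
)

// connRe matches the "tcp <local ip>:<port>-><remote ip>:<port>" fragment
// that appears in the read/write errors logged above (IPv4 only).
var connRe = regexp.MustCompile(`tcp (\d+\.\d+\.\d+\.\d+):(\d+)->(\d+\.\d+\.\d+\.\d+):(\d+)`)

// rstFilter builds a Wireshark display filter for RST packets sent from the
// remote (server) side to the local (client) side of the connection named
// in logLine. It returns false when the line has no connection fragment.
func rstFilter(logLine string) (string, bool) {
	m := connRe.FindStringSubmatch(logLine)
	if m == nil {
		return "", false
	}
	filter := fmt.Sprintf(
		"ip.src == %s && tcp.srcport == %s && ip.dst == %s && tcp.dstport == %s && tcp.flags.reset == 1",
		m[3], m[4], m[1], m[2],
	)
	return filter, true
}

func main() {
	line := "2019-08-01T11:10:50.833-07:00 [ERR] gocb: memdClient read failure: " +
		"read tcp 172.23.104.204:49994->172.23.107.86:11207: read: connection reset by peer"
	if f, ok := rstFilter(line); ok {
		fmt.Println(f)
	}
}
```

For the first error line above, this prints `ip.src == 172.23.107.86 && tcp.srcport == 11207 && ip.dst == 172.23.104.204 && tcp.dstport == 49994 && tcp.flags.reset == 1`, which can be pasted into the Wireshark filter bar in place of a hand-picked stream number.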

Questions:

1. We want to understand what these network errors are.

The gocb trace logs don't reveal too much, and neither does the raw packet capture.

There's a cbcollect attached, which may shed some light.

2. Why is the pipeline client dying/restarting so often in general? Is it because of the network errors in 1?

If at all possible, we'd like some assistance on this issue from the SDK or server side! Thanks

Attachments

Issue Links

- blocks CBG-317 network errors while getting bulk get with multi channel with server ssl enabled (Closed)