Details

Bug

Resolution: Fixed

Major

5.0.0

Untriaged

Unknown

Description

Build

5.0.0-2958

In addition to the following:

----------------------------------------------------------------

1. Started with a single node running kv + fts (8GB RAM, 4 cores, 500GB SSD).

2. Created a bucket called "dumps" with 2GB RAM and value eviction.

3. Loaded the "companies" and "yelp" datasets: a total of 6.4M docs, some as large as 86K (yelp-reviews).

4. Active resident ratio went as low as 0.02%, at which point I started getting OOM errors and then a hard OOM error. I then added 2 more kv+fts nodes (same config as the first node). Rebalance started at 3:37:23 PM Thu Jun 1, 2017.

5. Changed value eviction to full eviction on "dumps" and increased the bucket quota to 3GB.

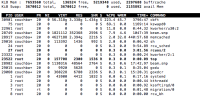

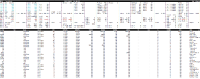

6. Loading completed, kv data was distributed evenly among the nodes, and active resident ratio went up to 36.6%. However, rebalance was trudging along on the fts side: the first node had 6 pindexes while the other two nodes had only 1 each. I ran atop on the first node; please see the attached screenshots showing very high virtual memory and disk usage.

7. I checked back at 8:30 PM (5 hrs later) and rebalance was only 93% complete.

8. It appears from logs that rebalance completed successfully at 1:56:30 AM Fri Jun 2, 2017 (10.5 hrs in all).

9. Indexing reached 100% by 11:00 AM (9 hrs later). The indexing rate showed isolated spikes and was totally dependent on compaction, i.e., it appeared we were not indexing a steady stream of data at any point in time.

10. Did some more doc updates (only) and started to rebalance out one node (.216).

I saw the doc count go down from 6.41M to 5.2M while rebalance was in progress. Rebalance out took 10 hrs.
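For reference, the elapsed times quoted in steps 4, 7, 8 and 9 can be recomputed from the timestamps in this report. This is only a rough sketch: the 8:30 PM and 11:00 AM marks are approximate observations, and the variable names are made up for illustration.

```python
from datetime import datetime

FMT = "%Y-%m-%d %H:%M:%S"

# Timestamps copied from the steps above. The 8:30 PM check and the
# 11:00 AM indexing mark are approximate, so treat the results as estimates.
rebalance_start = datetime.strptime("2017-06-01 15:37:23", FMT)
progress_check = datetime.strptime("2017-06-01 20:30:00", FMT)
rebalance_end = datetime.strptime("2017-06-02 01:56:30", FMT)
indexing_done = datetime.strptime("2017-06-02 11:00:00", FMT)


def hours_between(start, end):
    """Return the elapsed time between two datetimes in hours."""
    return (end - start).total_seconds() / 3600


hours_to_check = hours_between(rebalance_start, progress_check)
rebalance_hours = hours_between(rebalance_start, rebalance_end)
indexing_hours = hours_between(rebalance_end, indexing_done)

print(f"rebalance start -> 93% check:  {hours_to_check:.2f} h")
print(f"rebalance start -> completion: {rebalance_hours:.2f} h")
print(f"rebalance end -> indexing 100%: {indexing_hours:.2f} h")
```

By this arithmetic the rebalance-in took a little over 10.3 hours, close to the 10.5 hrs quoted above, and indexing caught up roughly 9 hours after that.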

-----------------------------------------------------

I then added 2M more docs to the bucket.

11. Indexing is very, very slow: about 10k docs in 30 mins or so, and very sporadic. There are long periods of idle time. I logged into the nodes and I see cbft using about 52GB virtual memory and 45-50% physical memory; 35-40% is used by memcached. I have logged a CBIT to add more memory to these nodes, but it appears we must log this need for memory to alert the user.
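To put the observed rate in perspective, here is a back-of-the-envelope calculation. It assumes the "10k docs in 30 mins" figure holds as a steady average, which the sporadic behaviour above suggests it may not; the variable names are illustrative only.

```python
# Figures taken from the report: ~10k docs indexed per 30 minutes,
# with 2M newly added docs waiting to be indexed.
docs_indexed = 10_000
window_minutes = 30
backlog_docs = 2_000_000

# Average ingestion rate, assuming (optimistically) that it stays constant.
docs_per_second = docs_indexed / (window_minutes * 60)

# Time needed to index the whole backlog at that rate.
hours_for_backlog = backlog_docs / docs_per_second / 3600

print(f"average rate: {docs_per_second:.2f} docs/sec")
print(f"estimated time for {backlog_docs:,} docs: {hours_for_backlog:.0f} hours")
```

At that pace the 2M new docs would need on the order of 100 hours, i.e. several days, to be fully indexed.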

Logs:

https://s3.amazonaws.com/cb-engineering/fts_no-ingestion/collectinfo-2017-06-07T190344-ns_1%40172.23.105.224.zip

https://s3.amazonaws.com/cb-engineering/fts_no-ingestion/collectinfo-2017-06-07T190344-ns_1%40172.23.106.120.zip