Details

- Bug
- Resolution: Fixed
- Test Blocker
- 6.5.0
- 6.5.0-4471
- Untriaged
- Yes

Description

1. Created a 4-node cluster:

+----------------+----------+--------------+
| Nodes          | Services | Status       |
+----------------+----------+--------------+
| 172.23.105.220 | [u'kv']  | Cluster node |
| 172.23.105.221 | None     | <--- IN ---  |
| 172.23.105.223 | None     | <--- IN ---  |
| 172.23.105.225 | None     | <--- IN ---  |
+----------------+----------+--------------+

2. Created a bucket (replicas=2):

http://172.23.105.220:8091/pools/default/buckets with params: replicaIndex=0&maxTTL=0&flushEnabled=1&compressionMode=off&bucketType=membase&name=default&replicaNumber=2&ramQuotaMB=1424&threadsNumber=3&evictionPolicy=valueOnly
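For anyone reproducing step 2 by hand, the request can be sketched in Python as below. This only builds the request and does not send it. The `BUCKET_PARAMS` name and the `build_bucket_request` helper are illustrative and not part of the test framework, and authentication is left out because the report does not show the credentials used.

```python
from urllib.parse import parse_qs, urlencode
from urllib.request import Request

# Parameters copied from step 2 of this report, in the same order.
BUCKET_PARAMS = {
    "replicaIndex": 0,
    "maxTTL": 0,
    "flushEnabled": 1,
    "compressionMode": "off",
    "bucketType": "membase",
    "name": "default",
    "replicaNumber": 2,
    "ramQuotaMB": 1424,
    "threadsNumber": 3,
    "evictionPolicy": "valueOnly",
}


def build_bucket_request(host: str) -> Request:
    """Build (but do not send) the bucket-creation POST for the given node.

    Hypothetical helper for illustration; credentials are intentionally omitted.
    """
    url = f"http://{host}:8091/pools/default/buckets"
    body = urlencode(BUCKET_PARAMS).encode("ascii")
    return Request(url, data=body, method="POST")


request = build_bucket_request("172.23.105.220")
# Decode the form body again to confirm what would be sent.
decoded = parse_qs(request.data.decode("ascii"))
```

Sending it would additionally need administrator credentials for the cluster, for example through an `Authorization` header added to the request.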

3. Loaded 250k items with durability=majority

+---------+---------+----------+-----+--------+------------+-----------+-----------+
| Bucket  | Type    | Replicas | TTL | Items  | RAM Quota  | RAM Used  | Disk Used |
+---------+---------+----------+-----+--------+------------+-----------+-----------+
| default | membase | 2        | 0   | 250000 | 5972688896 | 232418608 | 134540993 |
+---------+---------+----------+-----+--------+------------+-----------+-----------+
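The RAM Quota value in the table is consistent with the `ramQuotaMB=1424` from step 2 if the figure is reported in bytes and summed across the 4 nodes that were in the cluster at this point. That reading is an assumption, not something the report states; the arithmetic itself is checked below.

```python
RAM_QUOTA_MB = 1424  # ramQuotaMB from the bucket-creation request in step 2
NODE_COUNT = 4       # nodes in the cluster when the bucket stats were printed


def total_quota_bytes(quota_mb: int, nodes: int) -> int:
    """Per-node quota in MiB converted to bytes and summed over all nodes."""
    return quota_mb * 1024 * 1024 * nodes


total = total_quota_bytes(RAM_QUOTA_MB, NODE_COUNT)
print(total)  # 5972688896, matching the RAM Quota column
```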

4. Rebalanced in 2 more nodes and loaded another 250k items:

+----------------+----------+--------------+
| Nodes          | Services | Status       |
+----------------+----------+--------------+
| 172.23.105.225 | [u'kv']  | Cluster node |
| 172.23.105.220 | [u'kv']  | Cluster node |
| 172.23.105.223 | [u'kv']  | Cluster node |
| 172.23.105.221 | [u'kv']  | Cluster node |
| 172.23.105.226 | None     | <--- IN ---  |
| 172.23.105.227 | None     | <--- IN ---  |
+----------------+----------+--------------+

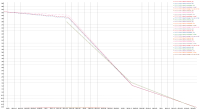

Observation: During the rebalance, all inserts succeeded apart from a few DurabilityImpossibleException errors, which are expected. Within 2 minutes of the rebalance finishing, doc ops suddenly dropped and all requests started timing out.
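As background on why a few DurabilityImpossibleException errors are expected during a rebalance, here is a minimal sketch. It assumes that durability=majority needs more than half of the configured copies (the active plus its replicas) and that the exception is raised when fewer copies than that are currently available. The function names are illustrative and are not server or SDK APIs.

```python
def majority(replicas: int) -> int:
    """Copies that must acknowledge a write: more than half of active + replicas."""
    copies = replicas + 1  # one active copy plus the configured replicas
    return copies // 2 + 1


def durability_possible(replicas: int, available_copies: int) -> bool:
    """True if enough copies are currently available to reach a majority."""
    return available_copies >= majority(replicas)


# The bucket in this report uses replicaNumber=2, so 3 copies in total.
needed = majority(2)
```

Under these assumptions a write to this bucket needs 2 of its 3 copies, so a vBucket that temporarily has only its active copy available while replicas are being moved would reject the write.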

Logs:

https://cb-jira.s3.us-east-2.amazonaws.com/logs/post_rebalance_requesttimeout/collectinfo-2019-10-08T150509-ns_1%40172.23.105.220.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/post_rebalance_requesttimeout/collectinfo-2019-10-08T150509-ns_1%40172.23.105.221.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/post_rebalance_requesttimeout/collectinfo-2019-10-08T150509-ns_1%40172.23.105.223.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/post_rebalance_requesttimeout/collectinfo-2019-10-08T150509-ns_1%40172.23.105.225.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/post_rebalance_requesttimeout/collectinfo-2019-10-08T150509-ns_1%40172.23.105.226.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/post_rebalance_requesttimeout/collectinfo-2019-10-08T150509-ns_1%40172.23.105.227.zip

This issue is not seen on 6.5.0-4380, which is the last weekly build.

Logs for 4380 build:

https://cb-jira.s3.us-east-2.amazonaws.com/logs/6.5.0-4380/collectinfo-2019-10-08T154054-ns_1%40172.23.105.220.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/6.5.0-4380/collectinfo-2019-10-08T154054-ns_1%40172.23.105.221.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/6.5.0-4380/collectinfo-2019-10-08T154054-ns_1%40172.23.105.223.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/6.5.0-4380/collectinfo-2019-10-08T154054-ns_1%40172.23.105.225.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/6.5.0-4380/collectinfo-2019-10-08T154054-ns_1%40172.23.105.226.zip

https://cb-jira.s3.us-east-2.amazonaws.com/logs/6.5.0-4380/collectinfo-2019-10-08T154054-ns_1%40172.23.105.227.zip