Details

Bug

Resolution: Fixed

Major

7.0.0, 7.0.1, 7.0.2, 7.0.3

None

Triaged

1

Unknown

Description

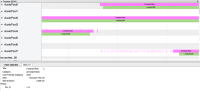

During investigation of MB-50389, it was observed that more Compaction tasks were concurrently running than expected. For example, when configuring a maximum of 2 Compaction tasks to run concurrently, the following Phosphor trace was seen:

Note how initially two compaction tasks were running at the same time, but in the highlighted area, this jumped up to 3.

The issue is how we handle Compaction tasks which cannot run because the vBucket is currently locked (e.g. by the Flusher). These tasks should be rescheduled to try compacting again, and indeed we do see this behaviour. For example, if one zooms into the region around where the 3 concurrent compactors start, one can see a number of compaction tasks with very short runtimes; these are the attempts to compact a locked vBucket (514):

This in itself is fine; however, note that shortly after these retries we see a 3rd CompactTask start on thread 0.

Digging into the Compaction scheduling code, the issue is that in the case of a CompactTask having to be re-scheduled due to a locked VB, we also wake up an additional CompactTask (assuming there are some waiting) - causing the limit to be exceeded.
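The effect described above can be reproduced with a small model. This is only an illustrative sketch, not the kv_engine scheduling code: `CompactionLimiter`, its methods, and `peakConcurrency` are hypothetical names, and the model assumes that a task which is retried because of a locked vBucket keeps its concurrency slot while it waits to run again.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>

// Hypothetical model of a concurrency-limited compaction scheduler.
struct CompactionLimiter {
    explicit CompactionLimiter(std::size_t limit) : limit(limit) {}

    // A new task asks to run: start it if a slot is free, otherwise queue it.
    void schedule() {
        if (running < limit) {
            start();
        } else {
            ++waiting;
        }
    }

    // A task finished compacting: release its slot and wake one waiter.
    void onComplete() {
        --running;
        wakeOneWaiter();
    }

    // A task found its vBucket locked and will be retried.
    // Assumption: the retrying task still holds its slot, so `running`
    // is left unchanged here.
    void onVBucketLocked(bool wakeWaiter) {
        if (wakeWaiter) {
            // Models the reported behaviour: a waiter is woken even though
            // no slot was released.
            wakeOneWaiter();
        }
    }

    std::size_t limit;
    std::size_t running = 0;
    std::size_t waiting = 0;
    std::size_t peakRunning = 0;

private:
    void start() {
        ++running;
        peakRunning = std::max(peakRunning, running);
    }

    // Starts a waiting task without re-checking the limit; callers are
    // expected to have released a slot first.
    void wakeOneWaiter() {
        if (waiting > 0) {
            --waiting;
            start();
        }
    }
};

// Runs the scenario from the ticket: limit of 2, three tasks scheduled,
// then one running task hits a locked vBucket. Returns the peak number
// of concurrently running tasks.
std::size_t peakConcurrency(bool wakeOnReschedule) {
    CompactionLimiter limiter(2);
    limiter.schedule();  // runs
    limiter.schedule();  // runs
    limiter.schedule();  // waits, limit reached
    limiter.onVBucketLocked(wakeOnReschedule);
    return limiter.peakRunning;
}
```

In this model, `peakConcurrency(true)` reaches 3 running tasks against a limit of 2, matching the trace, while `peakConcurrency(false)` stays at 2. Within the model, waking a waiter only when a slot has actually been released is enough to respect the limit.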