Details

Type: Bug

Resolution: Not a Bug

Priority: Major

Version: 7.1.0

Machines: AWS, m5.4xlarge (16 vCPU, 64 GB memory, 1 TB disk) x 4

Cluster: 4 nodes (3 KV, 1 Indexer)

OS: Amazon Linux 2

Replicated on: 7.1.0-1831, 7.1.0-2284

Untriaged

1

Unknown

Description

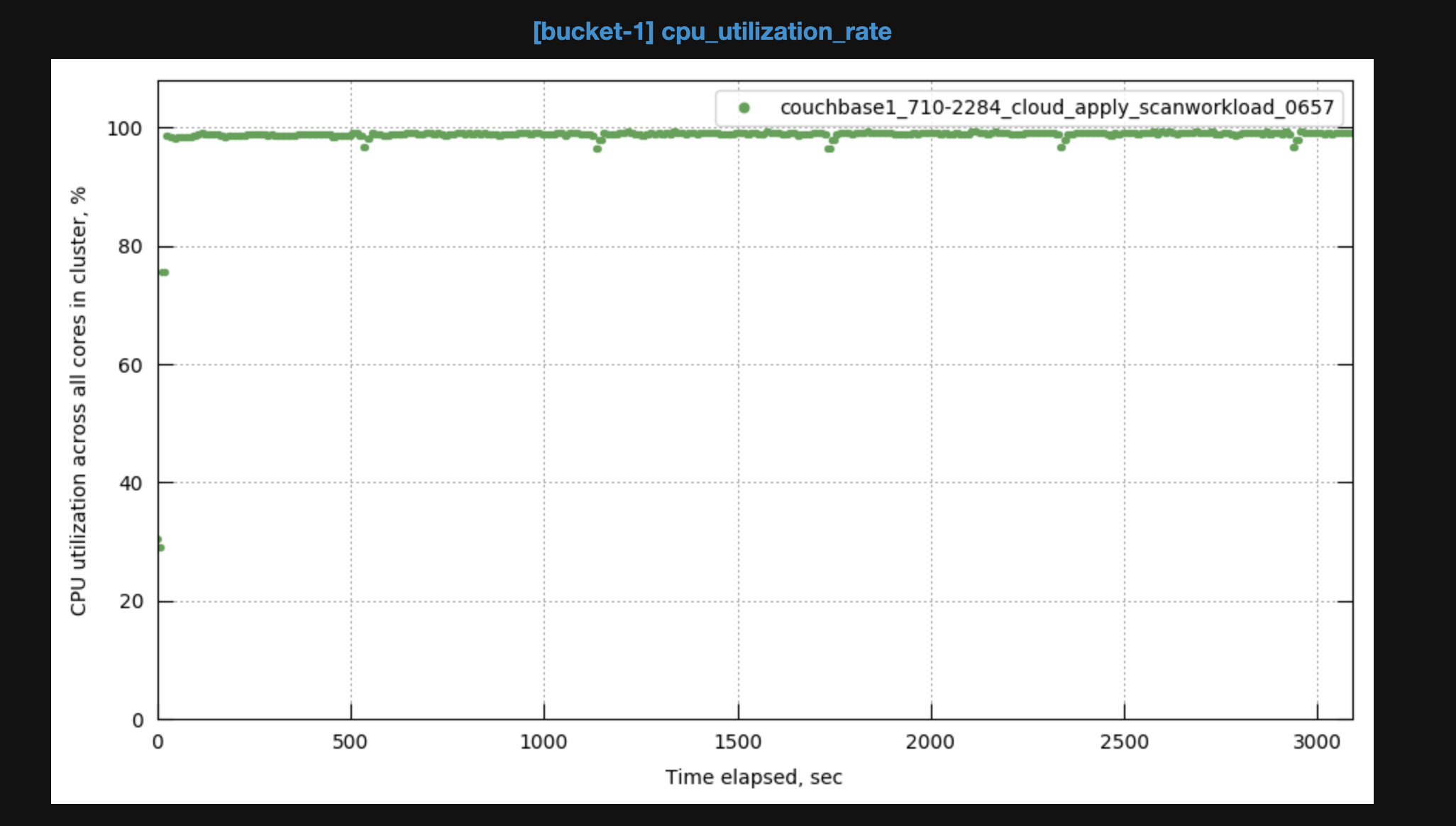

When running initial-scan performance tests for Capella GSI, we see the CPU on the index node saturated, and the run hits errors from MetaKV:

Rev RPC URL:

`20:17:22 [ec2-3-238-21-92.compute-1.amazonaws.com] run: echo 'export CBAUTH_REVRPC_URL=http://Administrator:password@ec2-3-237-178-20.compute-1.amazonaws.com:8091/query' >> ~/.bashrc`

`20:30:49 2022-02-15T07:00:49 [INFO] To be applied: ./opt/couchbase/bin/cbindexperf -cluster ec2-3-237-178-20.compute-1.amazonaws.com:8091 -auth="Administrator:password" -configfile tests/gsi/new_plasma/config/config_scan_multiple_plasma.json -resultfile result.json -statsfile /root/statsfile -cpuprofile cpuprofile.prof -memprofile memprofile.prof -use_tls -cacert ./root.pem`

`15:50:50 [ec2-3-238-21-92.compute-1.amazonaws.com] out: 2022-02-15T15:50:50.252+00:00 [Error] Fail to get indexer version from metakv. Internal Error = Get /_metakv/indexing/info/versionToken: missing port in address`

`15:50:50 [ec2-3-238-21-92.compute-1.amazonaws.com] out: 2022-02-15T15:50:50.252+00:00 [Fatal] MetakvSet Failed to set /indexing/info/versionToken: Put /_metakv/indexing/info/versionToken: missing port in address`

The 7.1.0-1831 run used `"Repeat": 1999999` and `"Concurrency": 128`:

http://perf.jenkins.couchbase.com/job/Cloud-Tester-Dev/251/

For 7.1.0-2284 we scaled the workload down to ease CPU pressure, to `"Repeat": 19999` and `"Concurrency": 64`, but CPU usage still stayed at around 100% for the whole scan phase. The job for that run:

http://perf.jenkins.couchbase.com/job/Cloud-Tester-Dev/252/
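To put the two configurations side by side, the sketch below parses only the `Repeat` and `Concurrency` values from each run and computes how much each was reduced: roughly 100x fewer repeats and half the concurrency, yet CPU stayed near 100%. The flat `scanLoad` struct and the helpers `parseLoad` and `reductionFactors` are hypothetical; in the real cbindexperf config file we believe `Repeat` sits inside a per-scan spec alongside other fields, so this is not a parser for that file.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// scanLoad is a hypothetical, simplified view holding only the two
// settings discussed in this report (not the real cbindexperf schema).
type scanLoad struct {
	Repeat      int `json:"Repeat"`
	Concurrency int `json:"Concurrency"`
}

// parseLoad decodes a JSON fragment containing Repeat and Concurrency.
func parseLoad(s string) (scanLoad, error) {
	var l scanLoad
	err := json.Unmarshal([]byte(s), &l)
	return l, err
}

// reductionFactors returns how many times smaller each setting is in
// after than in before.
func reductionFactors(before, after scanLoad) (repeat, concurrency float64) {
	repeat = float64(before.Repeat) / float64(after.Repeat)
	concurrency = float64(before.Concurrency) / float64(after.Concurrency)
	return repeat, concurrency
}

func main() {
	run1831, err := parseLoad(`{"Repeat": 1999999, "Concurrency": 128}`)
	if err != nil {
		panic(err)
	}
	run2284, err := parseLoad(`{"Repeat": 19999, "Concurrency": 64}`)
	if err != nil {
		panic(err)
	}
	r, c := reductionFactors(run1831, run2284)
	fmt.Printf("Repeat reduced %.1fx, Concurrency reduced %.1fx\n", r, c)
}
```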

Both jobs generated cbmonitor reports in which the CPU usage can be seen:

7.1.0-2284

http://cbmonitor.sc.couchbase.com/reports/html/?snapshot=couchbase1_710-2284_cloud_apply_scanworkload_0657

I have also attached result.json, which does not look out of the ordinary.

We use exactly the same command for both cloud and non-cloud testing, but the issue only seems to appear on cloud.